Why Most AI Projects Fail Before They Start

Everyone is talking about AI, on LinkedIn and everywhere else. And for good reason. The AI market is growing fast. Vendors are multiplying. Budgets are being allocated. Announcements are being made. And quietly, in organizations across the country, AI initiatives are failing.

I think everyone has seen the news from MIT about 95% of AI initiatives failing. The methodology has been debated: the number really reflects sustained organizational ROI, not whether the technology worked. Fair critique. But even the more conservative estimates tell a story most organizations don’t want to hear: AI initiatives, whether you build or buy, are failing at rates that should give any leadership team pause. That failure doesn’t just show up as a line item in the books. It shows up when staff resist the next thing leadership wants to implement.

Now, the thing I keep seeing that is honestly driving me nuts is the why. I am a project manager and independent consultant, so seeing the whole system comes naturally to me. But over and over again I see otherwise really smart people saying that when these initiatives fail, it’s because of x. One reason. Maybe it’s context. Maybe it’s corporate buy-in. Maybe it’s a lack of success metrics. Regardless, too many people think they have a handle on the ONE reason these initiatives are failing.

I’ll tell you what. Failure is happening not at go-live, not during implementation, but long before anyone writes a line of code or configures a single workflow.

The failure happens in the planning phase. Or more accurately, in the absence of one.

I don’t think this is a whole lot different from other technology shifts we’ve been through. This isn’t a technology problem. The technology, in most cases, works. AI tools have matured significantly over the last several years, even for healthcare (classically the laggard of the group), and sometimes especially for healthcare. What’s available today, from ambient clinical documentation to predictive risk stratification to revenue cycle automation, is genuinely capable of delivering value in the right environment.

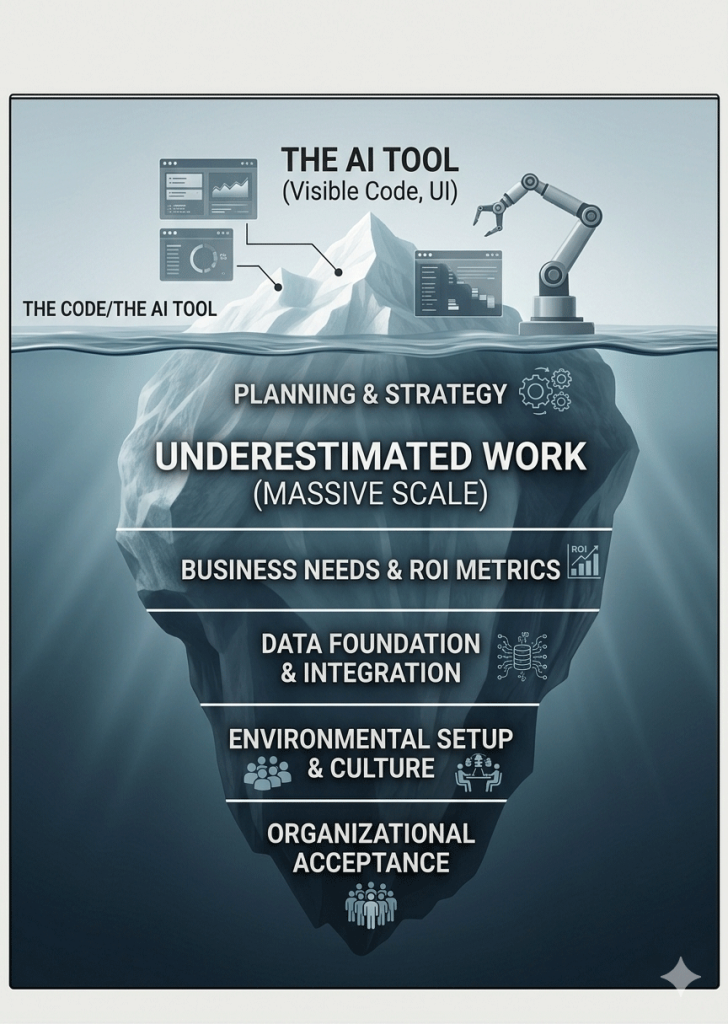

The environment is the problem. And building the right environment is work that most organizations either underestimate, skip entirely, or hand off to a vendor who has no incentive to tell them it isn’t done yet.

Here’s what that failure actually looks like — and why it keeps happening.

Failure Mode 1: The Problem Isn’t Defined

The most common starting point for a failed AI initiative is a leadership mandate that sounds like this: “We need to be doing something with AI.”

That’s not a problem statement. It’s pressure — pressure from a board that read something in a trade publication, from a health system competitor that made an announcement, from a vendor who got a meeting with the CEO and made everything sound inevitable.

Pressure is not a strategy. And “doing something with AI” is not a project.

The organizations that succeed with AI start with a specific, concrete problem they are trying to solve. Not “improve operational efficiency” — that’s a category, not a problem. Not “leverage AI to enhance patient outcomes” — that’s a press release. A real problem sounds like: “Our clinical staff spend an average of two hours per day on documentation that doesn’t require clinical judgment, and that time is coming directly out of patient-facing care.”

That’s a problem you can design a solution around. That’s a problem you can measure. That’s a problem where you can evaluate whether the AI tool you’re considering actually addresses it — or whether it addresses a slightly different problem that the vendor happens to have a solution for.

When the problem isn’t defined, every vendor looks like a fit. Every demo is impressive. Every ROI calculator produces a compelling number. And the organization ends up selecting a tool based on the quality of the sales presentation rather than the relevance of the solution.

Failure Mode 2: The Organization Isn’t Ready

Many organizations tend to mistake enthusiasm for readiness. Leadership is excited. The strategic plan mentions AI. A committee has been formed. Surely the organization is ready to move.

It isn’t, unless it has actually done the work of assessing readiness — honestly, specifically, and across all the dimensions that matter.

People readiness: does the workforce have the skills to work with AI tools? Is there genuine buy-in, or is there surface-level compliance masking significant skepticism? Have the leaders who will make or break adoption actually been engaged — not informed, but engaged?

Process readiness: are the workflows that AI will touch stable, documented, and consistently followed? Or are they ad hoc, variable, and only partially understood by the people doing them? AI applied to an unstable process doesn’t stabilize it. It accelerates the instability.

Data readiness: is the data that the AI tool needs available, clean, and accessible? In healthcare, this question has a compliance dimension as well — is that data governed appropriately under HIPAA, and are the necessary agreements and controls in place to use it?

Technology readiness: can the existing infrastructure support the implementation? Are the integration points understood? Are the security requirements met?

Each of these dimensions can independently derail an AI initiative. Most organizations assess none of them before committing to an implementation — because doing so requires honesty about gaps that nobody wants to report upward, and because the vendor’s implementation timeline doesn’t have room for foundational work that should have happened before the contract was signed.

Failure Mode 3: The Vendor’s Timeline Becomes the Project’s Timeline

Like many other software vendors, AI vendors often have standard implementation timelines. Those timelines were built around a hypothetical customer: well-resourced, with clean data, a dedicated IT team, and staff who have bandwidth to participate in implementation activities on top of their regular responsibilities.

That customer is hard to find. Few healthcare organizations look like that, and most FQHCs, rural hospitals, and community health centers definitely do not.

When a vendor’s standard timeline becomes the project’s timeline without adjustment for organizational reality, the project starts behind before it begins. Testing phases get compressed because the people who need to do the testing are also managing their sales pipeline or customer operations or patient care. Training gets rushed because there wasn’t enough time budgeted to do it properly. The go-live happens on schedule — because the timeline was fixed and the incentives pushed toward hitting the date — but the organization wasn’t ready for it.

A compressed, rushed go-live doesn’t produce a failed implementation. It produces a technically successful one that nobody trusts, that nobody uses consistently, and that takes the organization years to recover from, if it ever does.

The organizations that avoid this failure mode insist on building their own implementation timeline — one based on honest assessment of their available resources, their existing operational demands, and what preparation actually requires. They treat the vendor’s timeline as a starting point for negotiation, not a constraint to be accepted.

Failure Mode 4: Nobody Owns the Initiative

Enterprise initiatives have sponsors. They have steering committees. They have project teams. They frequently do not have a single person whose job it is to make sure the initiative succeeds — who owns the outcome, not just the process.

Executive sponsors provide visibility and resources but typically don’t have the time or operational proximity to actively drive implementation. Vendors drive their piece — the technical deployment — but their definition of success ends at go-live, not at adoption. IT manages infrastructure and integration but doesn’t own workflow change. Mid-level leadership provides input but has a day job.

In the gap between all of those stakeholders lives the initiative — and without someone whose explicit responsibility is to bridge that gap, things fall through it constantly.

Decisions don’t get made because nobody is sure who should make them. Issues escalate slowly because there’s no clear path for escalation. Vendors miss commitments without consequence because nobody is holding them accountable. Staff raise concerns that never reach decision-makers because there’s no mechanism for surfacing them.

This is the failure mode that’s hardest to see coming, because on paper the governance looks fine. There’s a sponsor. There’s a committee. There’s a project manager updating a status report. The initiative looks like it’s being managed, right up until it isn’t.

The fix is simple to describe and difficult to implement: identify one person — an internal owner or an independent consultant without a stake in the technology decision — whose job is to own the outcome. Not to coordinate. Not to facilitate. To own. Ownership means having skin in the game — not just updating a status report, but answering for the outcome when things go sideways. That accountability is what keeps everything else from slipping.

Failure Mode 5: Success Was Never Defined

At the end of a healthcare AI implementation, someone in leadership will ask whether it worked. What happens next reveals whether the organization thought about that question before it started.

Most organizations didn’t. Success was defined implicitly: a vague sense that things should be better, that staff should be happier, that some metric somewhere should have improved. When the question gets asked, the answers are anecdotal. People seem to like it. The vendor says utilization is up. The go-live was on time.

None of that is measurement. And without measurement, there’s no accountability — not to the board that approved the budget, not to the grant funders who may have contributed to the initiative, and not to the patients whose care the technology was supposed to improve.

Defining success before you start isn’t a bureaucratic exercise. It’s the thing that makes everything else possible. It forces clarity about what you’re actually trying to achieve. It surfaces disagreements about priorities early, when they’re cheap to resolve. It gives you the criteria to evaluate vendor claims, to assess your own readiness, and to determine whether the initiative delivered what was promised.

It also gives you something invaluable if the initiative struggles: a clear picture of where the gap is between where you are and where you intended to be, which is the foundation for course correction.

What Success Actually Requires

The common thread running through all five failure modes is not technical. It’s organizational.

AI initiatives are failing because organizations commit to technology before they’ve committed to the preparation that technology requires. To be sure, many large enterprises do all of these things well, and they succeed! Their success is how we know what good preparation looks like. However, other organizations, usually smaller ones, haven’t learned these lessons yet. They treat AI adoption as a procurement exercise rather than a transformation initiative. They move fast on the parts that feel like progress (selecting a vendor, signing a contract, scheduling a go-live) and skip the parts that are harder to show in a board presentation.

The organizations that succeed take a different approach. They start with the problem, not the solution. They assess readiness honestly before they make commitments. They build timelines around organizational reality rather than vendor convenience. They assign clear ownership. And they define success in advance so that everyone knows what they’re working toward.

None of that is complicated. None of it requires expertise that isn’t accessible. It requires discipline — the willingness to do the unglamorous preparation work before the exciting implementation work begins.

That discipline is what separates the healthcare organizations that are genuinely transforming how they operate with AI from the ones that are adding to a growing collection of expensive, underutilized technology investments.

The opportunity is real. Getting there starts before the contract is signed.

If you’re in the early stages of an AI initiative and want an honest assessment of whether your organization is set up to succeed, contact me. That’s exactly what an AI Readiness Review is designed to answer, and it can help you avoid the restart phase and compress total time to ROI.

TL;DR

Five reasons AI initiatives fail before they start:

- no defined problem
- no readiness assessment
- vendor timelines accepted without negotiation
- no single owner of the outcome
- no definition of success

All five are organizational failures, not technical ones. All five are preventable.